Five structural signals in the MemberWise Digital Excellence Report 2026 that senior leaders should not overlook.

[Based on responses from circa 480 membership body managers, directors and department heads across UK professional bodies, trade associations and chartered institutes. Data collected March–November 2025 from https://memberwise.org.uk/dx]

The MemberWise Digital Excellence Report rewards careful reading. Now in its tenth year, it is the most consistent longitudinal dataset available to UK membership sector leaders. Read at face value, its headline figures offer a broadly reassuring picture: acquisition is up, AI adoption is rising, communities are growing, and satisfaction with suppliers has improved. Read structurally, the picture is more complicated. Beneath the positive trend lines sit five compounding vulnerabilities that will widen over the next two to three years if boards and executive teams do not address them deliberately.

This briefing draws out those structural signals and frames the decisions they require.

- 26%: AI adoption sector-wide (up from 5% two years ago)

- 6%: have a formal AI strategy (20pp gap from the prior cycle)

- 14%: tech spend tied to strategy (86% investing without alignment)

- 19%: LMS integrated with AMS (despite 7 years of integration focus)

1. Acquisition-led growth without engagement infrastructure is not a viable strategy

New member acquisition has returned to the top priority position. The economic backdrop makes this understandable. Cost inflation, revenue pressure and constrained staffing have concentrated minds on the growth line. But the data, read as a system, tells a more uncomfortable story.

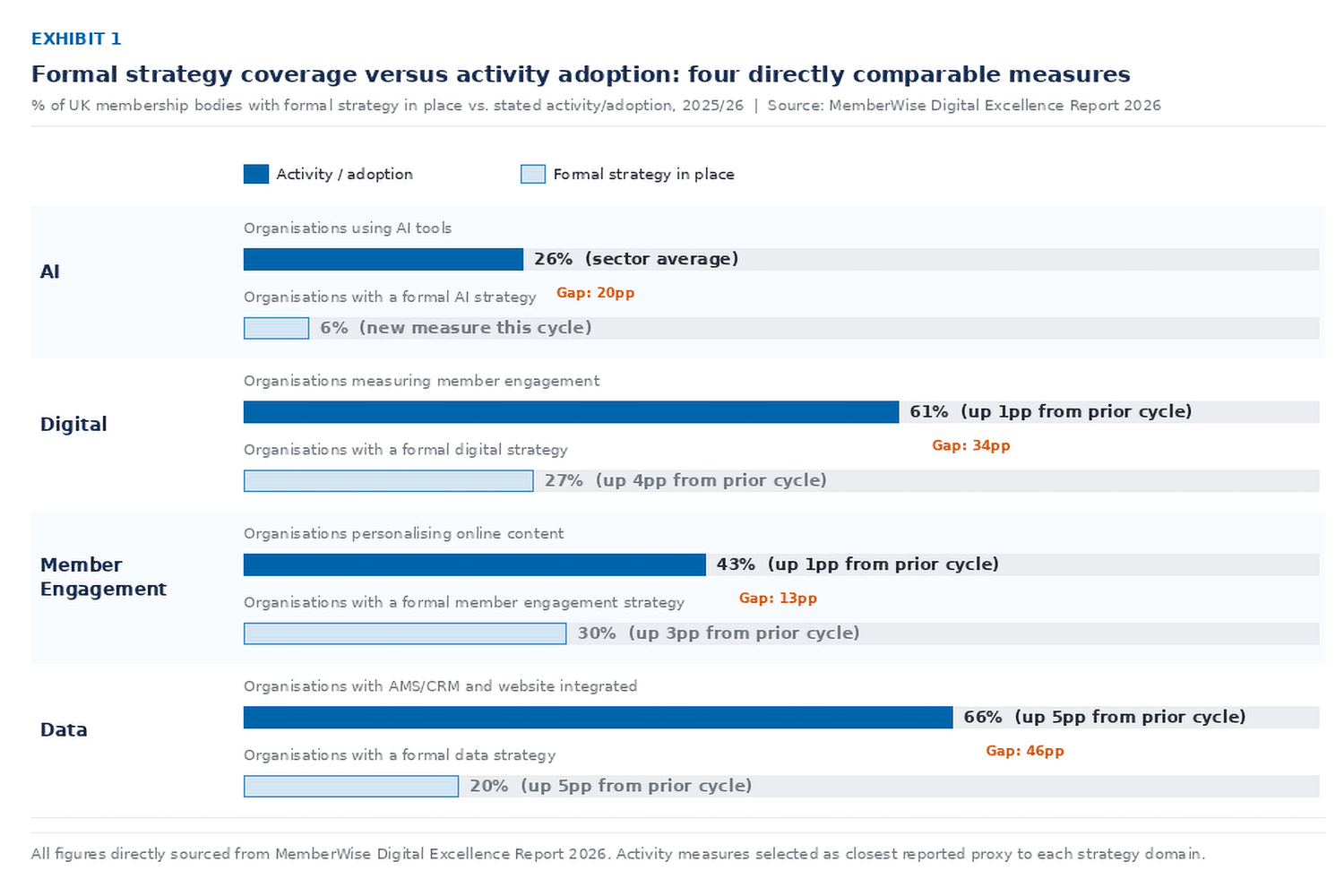

44% of organisations report increased acquisition. At the same time, member engagement, participation and advocacy are all flat. Online engagement – the mechanism through which members derive ongoing value – is the sector’s top unsolved challenge for the second consecutive cycle. Personalisation has barely moved in five years: 43% of organisations personalise content, up just 1 percentage point. And 77% of respondents report rising cost and workload without proportionate resource.

The underlying dynamic: An organisation acquiring members faster than it can engage them is managing a retention liability, not building sustainable membership. The data suggests a material proportion of the sector is in exactly this position. Growth metrics presented to boards without accompanying engagement cohort data are incomplete governance reporting.

The more diagnostic question for senior leaders is not the headline acquisition rate but the renewal pattern. What proportion of members acquired in the previous cycle are renewing without prompting? How does that compare with members acquired through referral versus campaign activity? These questions require engagement and behavioural data that most organisations in this report acknowledge they cannot yet answer.
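The renewal questions above can be made concrete with a small cohort calculation. The following is a minimal sketch only, not a method taken from the report: the `Member` record, the channel labels and the sample cohort are all hypothetical, and a real version would draw these fields from the AMS.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Member:
    member_id: str
    channel: str    # hypothetical acquisition labels: "referral" or "campaign"
    renewed: bool   # did the member renew at the end of the cycle?
    prompted: bool  # was a reminder or retention contact needed first?


def unprompted_renewal_rate(members):
    """Return {channel: share of that cohort renewing without prompting}."""
    totals = defaultdict(int)
    unprompted = defaultdict(int)
    for m in members:
        totals[m.channel] += 1
        if m.renewed and not m.prompted:
            unprompted[m.channel] += 1
    return {ch: unprompted[ch] / totals[ch] for ch in totals}


# Illustrative data only; not drawn from the report.
cohort = [
    Member("m1", "referral", True, False),
    Member("m2", "referral", True, True),
    Member("m3", "campaign", False, False),
    Member("m4", "campaign", True, False),
    Member("m5", "campaign", False, True),
    Member("m6", "campaign", True, True),
]

rates = unprompted_renewal_rate(cohort)
for channel, rate in sorted(rates.items()):
    print(f"{channel}: {rate:.0%} renewed without prompting")
```

A board pack that shows this split alongside the headline acquisition figure gives a far clearer view of whether growth is likely to hold at renewal.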

The report notes that non-subscription income has receded as a priority, and speculates that benefits may increasingly be bundled within membership fees. If accurate, this implies organisations are trading commercial diversification for perceived value density. The medium-term risk is that bundling increases price sensitivity at renewal and reduces optionality when the proposition needs refreshing.

2. Engagement measurement has been the sector’s top challenge for long enough to be a governance failure

The inability to measure member engagement has been in the report’s top three challenges for multiple consecutive cycles. It has now reached number one. This trajectory matters. A challenge that recurs without resolution is not a capability gap. It is a systemic failure to prioritise and resource a known problem.

The measurement methods in use reinforce the concern. 75% of organisations measuring engagement do so via online surveys; 72% via email marketing tools. These instruments capture declared sentiment at a point in time. They do not capture the behavioural signals that predict future disengagement: declining portal access, reduced CPD completion, falling community participation, reduced event attendance. The organisations that intervene before members lapse – rather than discovering lapse at renewal – do so using behavioural data that the majority of the sector does not currently aggregate.

The structural reason is visible in the integration data. The AMS holds membership history. The LMS holds learning behaviour. The community platform holds peer interaction. The website holds content consumption. Integration between these systems remains partial: LMS integration sits at 19%, unchanged from the prior cycle. Community platform integration is similarly low. Without a unified view of member behaviour across touchpoints, no individual in most organisations can answer a basic question: is this member becoming more or less engaged? Until that question has a reliable answer, engagement strategy will remain reactive rather than predictive.

- Only 61% of organisations measure engagement at all – up just 1pp from the previous cycle.

- Behavioural personalisation (using views, clicks and purchase behaviour) has declined slightly, suggesting reliance on static member profile data is deepening rather than reducing.

- No respondents cited online community tools as contributing to personalisation – despite community data being one of the richest available behavioural signals.
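To illustrate what answering "is this member becoming more or less engaged?" might involve once activity from the separate systems is brought together, here is a hedged sketch. It assumes a hypothetical combined event feed of `(member_id, source_system, event_date)` tuples and an arbitrary 90-day comparison window; neither comes from the report, and the simple event count stands in for whatever weighting an organisation would choose.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical combined feed: (member_id, source_system, event_date).
events = [
    ("m1", "lms", date(2025, 6, 10)),
    ("m1", "community", date(2025, 7, 2)),
    ("m1", "website", date(2025, 7, 20)),
    ("m1", "portal", date(2025, 10, 5)),
    ("m2", "events", date(2025, 7, 15)),
    ("m2", "lms", date(2025, 9, 20)),
    ("m2", "community", date(2025, 10, 20)),
]


def engagement_trend(events, as_of, window_days=90):
    """Compare each member's activity in the latest window with the window before it."""
    recent_start = as_of - timedelta(days=window_days)
    prior_start = recent_start - timedelta(days=window_days)
    recent, prior = Counter(), Counter()
    for member_id, _source, when in events:
        if recent_start < when <= as_of:
            recent[member_id] += 1
        elif prior_start < when <= recent_start:
            prior[member_id] += 1
    trend = {}
    for member_id in set(recent) | set(prior):
        if recent[member_id] > prior[member_id]:
            trend[member_id] = "rising"
        elif recent[member_id] < prior[member_id]:
            trend[member_id] = "falling"
        else:
            trend[member_id] = "flat"
    return trend


trend = engagement_trend(events, as_of=date(2025, 11, 1))
for member_id in sorted(trend):
    print(member_id, trend[member_id])
```

The calculation itself is trivial. The difficulty the integration data points to is assembling the `events` feed in the first place, because each source system holds its own slice of member behaviour.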

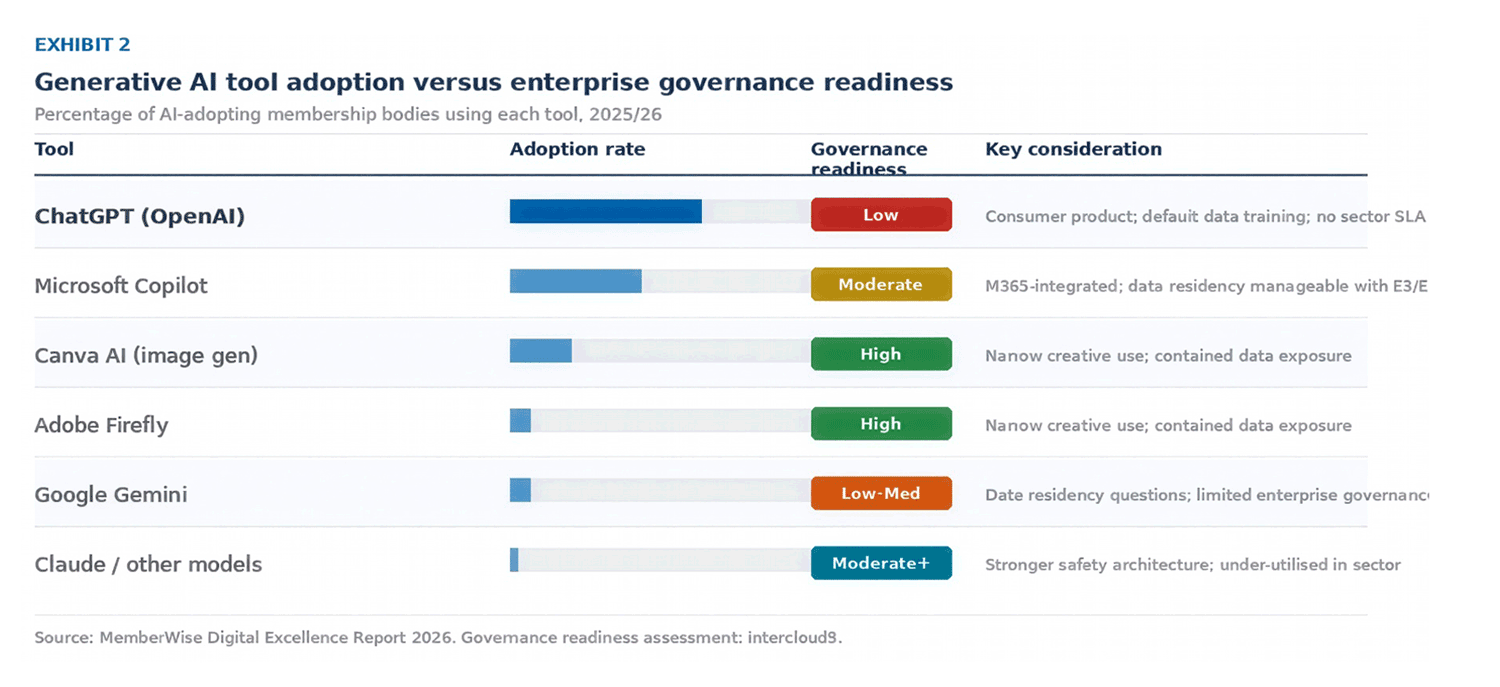

3. AI adoption at scale without governance infrastructure is a material organisational risk

AI adoption has increased from 5% to 26% of the sector in two years. The report compares this trajectory to early social media adoption. The analogy is useful, but not in the way it is perhaps intended: the sector’s social media journey was characterised by rapid adoption, followed by prolonged rationalisation as organisations discovered that tool deployment without strategic intent generated overhead rather than value. The AI trajectory is structurally similar – with stakes that are considerably higher.

Only 6% of organisations have a formal AI strategy. Set against 26% adoption, that means most organisations using AI are deploying tools – some of which, the report confirms, are being used for legal, compliance and policy document drafting – without a documented framework covering permissible use, data governance, accountability for outputs or escalation protocols. This is not a theoretical risk. It is current operational exposure.

The tool concentration picture compounds the risk significantly. 60% of AI-using organisations are using ChatGPT as their primary tool. Understanding what that actually means in practice – including where ChatGPT’s knowledge comes from – is important context for any organisation relying on it for professional or governance purposes.

A note on training data and what it means for reliability:

It is sometimes claimed that a very high proportion of ChatGPT’s knowledge derives from Reddit. The picture is more nuanced – and the accurate version is, if anything, more concerning than the simplified claim.

For GPT-3 – the foundational model on which ChatGPT was built – OpenAI’s own 2020 research paper documents that 22% of the training dataset came from ‘WebText2’: a corpus derived from the text of web pages linked in Reddit posts with three or more upvotes. The largest single component was Common Crawl – a broad web scrape – at 60%. Reddit was therefore not the majority source, but it was the second largest, and it served as a proxy filter for what counted as credible web content: if a link was shared and upvoted on Reddit, it qualified for inclusion.

The more current and operationally significant figure is different. As of mid-2025, Reddit accounts for approximately 40% of all citations in AI-generated search responses across major models including ChatGPT, Claude and Gemini – making it the single most referenced domain in AI outputs, ahead of Wikipedia at 26%. OpenAI formalised this relationship in May 2024 with a licensing agreement paying approximately £55 million annually for real-time access to Reddit’s data API. The implication is that Reddit is not merely a historical training artefact. It is an active, ongoing source shaping live AI responses.

Why this matters for professional bodies: Reddit’s demographics are young, male-skewed and heavily weighted towards technology and entertainment communities. Its content includes significant volumes of misinformation, unverified opinion and forum-style speculation alongside genuine expertise. For a professional membership body asking ChatGPT to draft a governance document, summarise a regulatory position, or respond to a member query about professional standards, the model’s outputs are being shaped – in part – by a platform whose epistemological standards are fundamentally different from those of a chartered professional institute. As of late 2025, OpenAI was reportedly reducing its reliance on Reddit as a training source precisely because of quality and misinformation concerns. The sector should not wait for OpenAI’s quality controls to catch up before assessing whether its own use of these tools is appropriate.

Several further structural concerns compound the concentration risk:

- ChatGPT is a consumer product. In its default configuration, user inputs may be used to improve the underlying model. For organisations handling member data, professional standards content or commercially sensitive strategy work, the data exposure implications have in most cases not been formally assessed. OpenAI does not apply its enterprise data protection terms to free or standard accounts.

- There is no sector-specific data protection SLA, no data residency guarantee appropriate to UK professional body obligations, and no contractual accountability for outputs used in governance or compliance contexts.

- Capability monoculture creates transition risk. Different large language models have materially different performance profiles: some are better suited to long-form synthesis and nuanced policy reasoning; others to code generation or structured data tasks. An organisation that has built familiarity with one consumer tool has not built AI capability. It has built dependency on one vendor’s product, pricing model and editorial decisions about training data.

- Consumer AI pricing is currently sub-commercial. OpenAI has raised prices substantially across its model tiers and the trajectory is upward as providers shift from user acquisition to margin recovery. Organisations with no viable alternative will have limited negotiating position when costs increase further.

The governance gap is the more urgent issue. The report confirms that AI is already embedded in legal, compliance and governance workflows in a proportion of organisations. Boards that have not asked the executive where AI is being used, what data it is accessing, who is accountable for its outputs, and whether the training provenance of that tool is consistent with the organisation’s professional standards obligations are operating with an incomplete risk picture.
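One way to picture the information a board would need is a simple register of AI use, with open governance questions flagged automatically. This is an illustrative sketch under stated assumptions: the `AIToolUse` fields mirror the four questions in the paragraph above, and the entries, role titles and wording of the flags are hypothetical rather than drawn from the report.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIToolUse:
    tool: str
    function: str                     # where the tool is being used
    data_accessed: str                # what data it touches
    accountable_owner: Optional[str]  # None if nobody has been named
    covered_by_policy: bool           # does a formal AI policy cover this use?
    provenance_reviewed: bool         # has training provenance been assessed?


def governance_gaps(register):
    """Return (tool, function, problems) for each entry with an open question."""
    gaps = []
    for entry in register:
        problems = []
        if entry.accountable_owner is None:
            problems.append("no accountable owner")
        if not entry.covered_by_policy:
            problems.append("not covered by a formal AI policy")
        if not entry.provenance_reviewed:
            problems.append("training provenance not reviewed")
        if problems:
            gaps.append((entry.tool, entry.function, problems))
    return gaps


# Hypothetical register entries for illustration.
register = [
    AIToolUse("ChatGPT", "policy drafting", "draft governance documents",
              None, False, False),
    AIToolUse("ChatGPT", "member communications", "newsletter copy",
              "Head of Marketing", True, True),
]

gaps = governance_gaps(register)
for tool, function, problems in gaps:
    print(f"{tool} ({function}): " + "; ".join(problems))
```

A register like this is only as good as its completeness, so the first governance task is discovering which tools are in use across all functions.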

4. Technology investment without strategic alignment is producing expensive incoherence

Most membership bodies are spending 2–5% of annual budget on technology – below the 6–10% level the report identifies as consistent with sectors that are successfully aligning investment with strategy. The quantum of underinvestment is significant, but it is not the primary concern. The more consequential finding is that only 14% of organisations have technology spend firmly aligned to a documented digital strategy with planned investments. The remaining 86% are investing on a project-by-project or reactive basis.

The structural consequence is visible in the member experience data. Organisations where different functions own different technology budgets – membership owning the AMS, learning and development owning the LMS, marketing owning the CMS – without coordinated oversight tend to procure systems optimised for departmental use rather than integrated member journeys. The report identifies this fragmentation directly as a source of poorly integrated solutions and inconsistent online member experience. It is not a technology problem. It is an organisational design and governance problem.

The integration cost of fragmented procurement: Ease of integration fell short of expectations for 59% of organisations that attempted it – up 11 percentage points from the prior cycle. This deterioration suggests that as the sector moves from straightforward two-system integrations to more complex multi-system architectures, the capability required to execute well is outpacing the capability being deployed. The cost of remediation consistently exceeds the cost of strategic planning at the outset.

A further signal worth noting: 10% of organisations confirmed they deferred long-term technology plans when more immediate investment requirements arose. In isolation this appears pragmatic. As a sector pattern, it is evidence of technology strategy that lacks board-level protection – meaning that the investments most important for sustainable capability development are consistently displaced by operational urgency.

5. Integration is improving in the established systems and stalling in the ones that matter most

The report’s positive integration story – AMS-website integration at 78%, up 5pp – is real progress. But it reflects the completion of a project the sector has been working on for over a decade. The more diagnostic signal is where integration is not improving.

LMS integration sits at 19%, flat from the prior cycle. Online community integration is similarly unchanged. These are the systems through which members access intellectual capital, develop professionally, connect with peers, and build the relationships that make membership feel indispensable. Their disconnection from the central member record means that an organisation’s most important behavioural data – whether a member is actively developing professionally, whether they are participating in the community, whether they are accessing the resources that justify their subscription – is invisible to the people responsible for retention and value strategy.

This is not a technology limitation. Every major AMS vendor in the sector now offers or supports LMS and community integrations. The barrier is resource allocation, internal prioritisation, and in many cases the absence of a digital strategy that would make the case for the investment compellingly. The 19% figure after seven years of sustained digital investment represents a strategic choice to defer, whether or not it has been framed that way.

- 47% of organisations now host an online community – up for the seventh consecutive year. The majority are using paid-for solutions. Yet community data is not feeding the member record or the personalisation layer in most cases.

- The shift away from free platforms (Facebook Groups at 5.5%, down from 16% in 2021) reflects growing maturity about the limits of third-party community infrastructure. The value of that shift is constrained while community data remains siloed.

Five questions for the boardroom

The MemberWise DX Report 2026 documents a sector that is broadly progressing. The five structural signals identified here are not evidence of failure. They are evidence of a maturation curve that is stalling in critical places – and that will compound if not addressed at the level of strategic governance rather than operational management. These questions are offered as a diagnostic for leadership and board conversations:

- Do we know, with confidence, whether our most valuable members are becoming more or less engaged – and do we have the behavioural data infrastructure and organisational accountability to intervene before lapse?

- Which AI tools are currently in active use across all functions of this organisation? What data are they accessing? Who is accountable for the governance of those tools, and does a formal AI policy exist?

- Is our total technology investment – across all budget holders – visible to a single owner, aligned to a documented digital strategy, and subject to coordinated governance at senior leadership level?

- Have we formally assessed the integration gap between our AMS and our LMS and community platforms, and is there a costed, time-bound plan to close it?

- Are we reporting acquisition metrics to the board alongside engagement cohort and renewal pattern data – and if not, what does our board-level governance actually tell us about the health of our membership?